Pay Self-Attention to Audio-Visual Navigation

Tsinghua University (THU)

British Machine Vision Conference (BMVC), 2022

Abstract

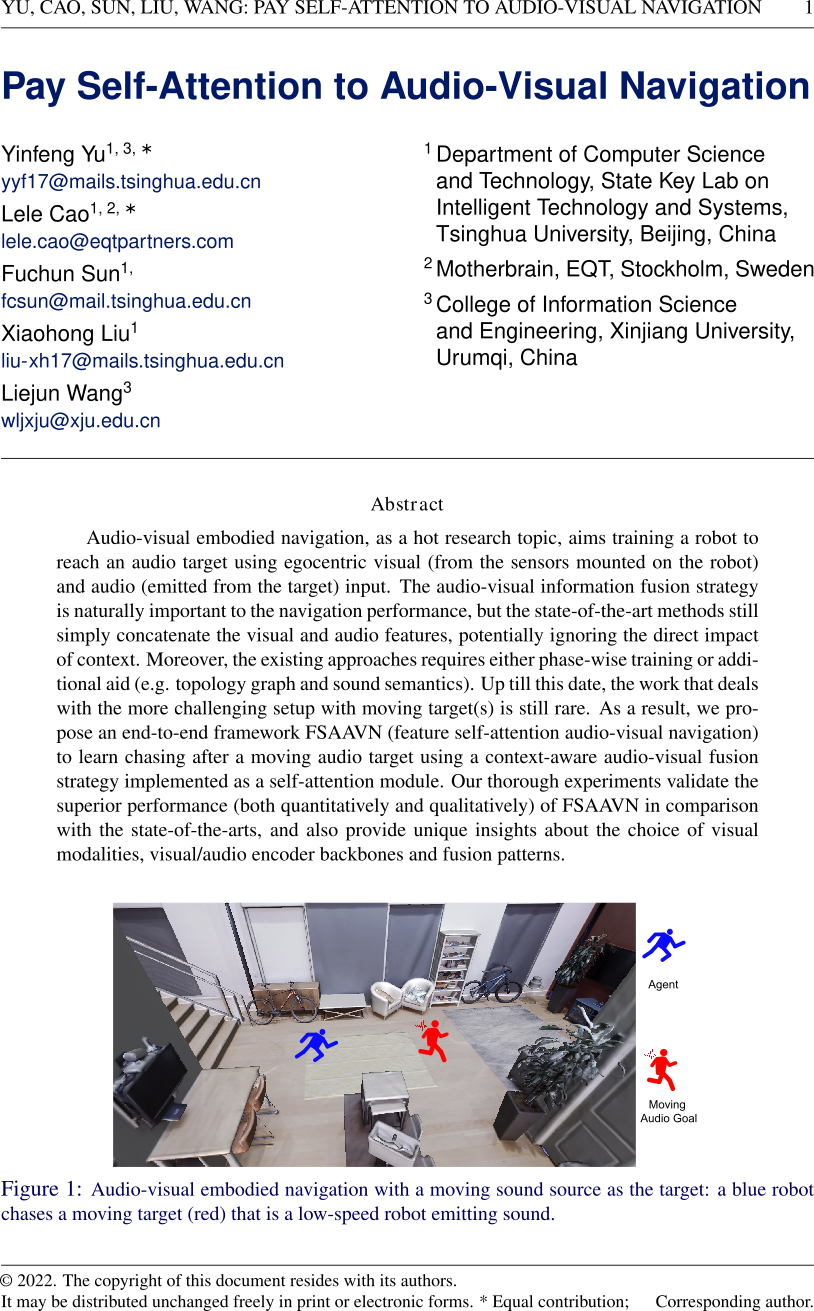

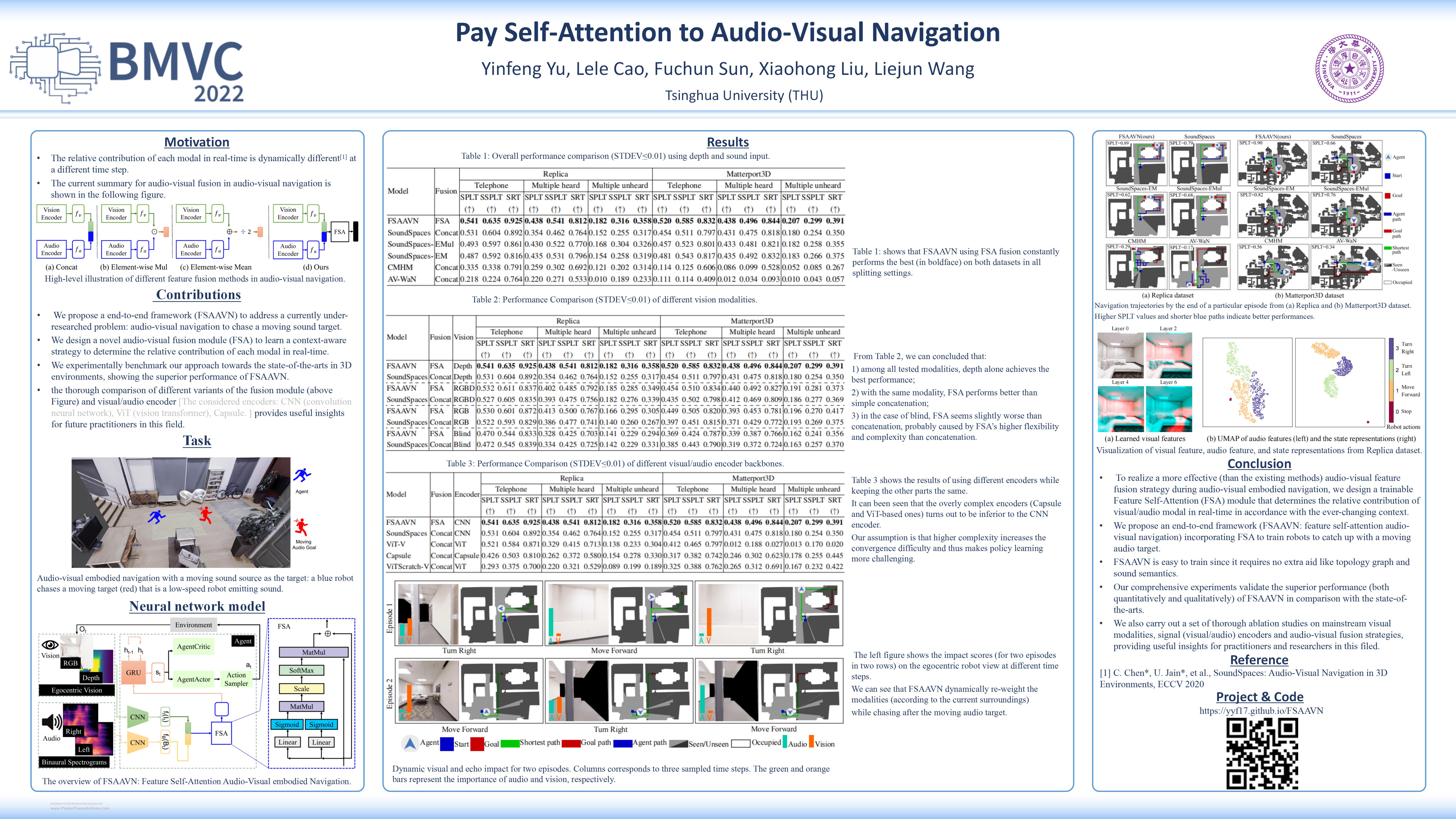

Audio-visual embodied navigation, an active research topic, aims to train a robot to reach an audio target using egocentric visual input (from sensors mounted on the robot) and audio input (emitted by the target). The audio-visual fusion strategy is naturally important to navigation performance, yet state-of-the-art methods still simply concatenate the visual and audio features, potentially ignoring the direct impact of context. Moreover, existing approaches require either phase-wise training or additional aids (e.g., a topology graph or sound semantics). To date, work addressing the more challenging setup with moving target(s) remains rare. We therefore propose FSAAVN (feature self-attention audio-visual navigation), an end-to-end framework that learns to chase a moving audio target using a context-aware audio-visual fusion strategy implemented as a self-attention module. Our thorough experiments validate the superior performance of FSAAVN, both quantitatively and qualitatively, compared with state-of-the-art methods, and provide unique insights into the choice of visual modalities, visual/audio encoder backbones, and fusion patterns. Project: https://yyf17.github.io/FSAAVN.
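The abstract contrasts plain concatenation of visual and audio features with a self-attention fusion module. The sketch below illustrates that general contrast under stated assumptions: one feature token per modality, a single attention head, small random projection weights, and pure Python. It is not the FSAAVN implementation; the function names (`self_attention_fusion`, `concat_fusion`), the feature dimension, and the toy feature values are hypothetical, and the paper's actual encoders, head count, and dimensions are not described here.

```python
import math
import random

def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def concat_fusion(tokens):
    """Baseline: simply concatenate the per-modality feature vectors."""
    return [x for t in tokens for x in t]

def self_attention_fusion(tokens, Wq, Wk, Wv):
    """Hypothetical single-head scaled dot-product self-attention fusion.

    tokens: list of modality feature vectors (e.g. [visual, audio]).
    Each output token is a weighted mix of all modality values, with
    weights computed from the current inputs. The attended tokens are
    concatenated into one fused vector.
    """
    q = [matvec(Wq, t) for t in tokens]
    k = [matvec(Wk, t) for t in tokens]
    v = [matvec(Wv, t) for t in tokens]
    d_k = len(k[0])
    fused, attn_rows = [], []
    for qi in q:
        scores = [sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d_k) for kj in k]
        weights = softmax(scores)
        attn_rows.append(weights)
        out = [sum(w * vj[c] for w, vj in zip(weights, v)) for c in range(len(v[0]))]
        fused.extend(out)
    return fused, attn_rows

# Toy usage with assumed 4-dimensional features and random projections.
rng = random.Random(0)
d = 4

def rand_matrix(n, m):
    return [[rng.gauss(0.0, 1.0 / math.sqrt(m)) for _ in range(m)] for _ in range(n)]

Wq, Wk, Wv = rand_matrix(d, d), rand_matrix(d, d), rand_matrix(d, d)
visual = [0.2, -0.1, 0.5, 0.3]  # hypothetical visual encoder output
audio = [0.9, 0.4, -0.2, 0.1]   # hypothetical audio encoder output

fused, attn = self_attention_fusion([visual, audio], Wq, Wk, Wv)
print("concat:", concat_fusion([visual, audio]))
print("attention weights:", attn)
```

With concatenation, each modality always occupies a fixed slot in the fused vector. With self-attention, the weights in `attn` depend on the current visual and audio inputs, so the relative contribution of each modality can change from step to step; this is one way to read "context-aware" fusion.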

Materials

Presentation

Citation

@inproceedings{Yu_2022_BMVC,
  author = {Yinfeng Yu and Lele Cao and Fuchun Sun and Xiaohong Liu and Liejun Wang},
  title = {Pay Self-Attention to Audio-Visual Navigation},
  booktitle = {33rd British Machine Vision Conference 2022, {BMVC} 2022, London, UK, November 21-24, 2022},
  year = {2022}
}

Example navigation video clips

1:Matterport3D-Depth-AV-WaN-splt0.34.mp4

2:Matterport3D-Depth-CMHM-splt0.56.mp4

3:Matterport3D-Depth-FSAAVN-splt0.90.mp4

4:Matterport3D-Depth-SoundSpaces-EM-splt0.82.mp4

5:Matterport3D-Depth-SoundSpaces-EMul-splt0.76.mp4

6:Matterport3D-Depth-SoundSpaces-splt0.66.mp4

7:Matterport3D-RGBD-FSAAVN-splt0.89.mp4

8:Matterport3D-RGBD-SoundSpaces-splt0.67.mp4

9:Replica-Depth-AV-WaN-splt0.17.mp4

10:Replica-Depth-CMHM-splt0.29.mp4

11:Replica-Depth-FSAAVN-splt0.89.mp4

12:Replica-Depth-SoundSpaces-EM-splt0.62.mp4

13:Replica-Depth-SoundSpaces-EMul-splt0.68.mp4

14:Replica-Depth-SoundSpaces-splt0.79.mp4

15:Replica-RGBD-FSAAVN-splt0.88.mp4

16:Replica-RGBD-SoundSpaces-splt0.70.mp4

Acknowledgement

This work is funded by the Sino-German Collaborative Research Project "Crossmodal Learning" (identification number NSFC62061136001/DFG SFB/TRR169).